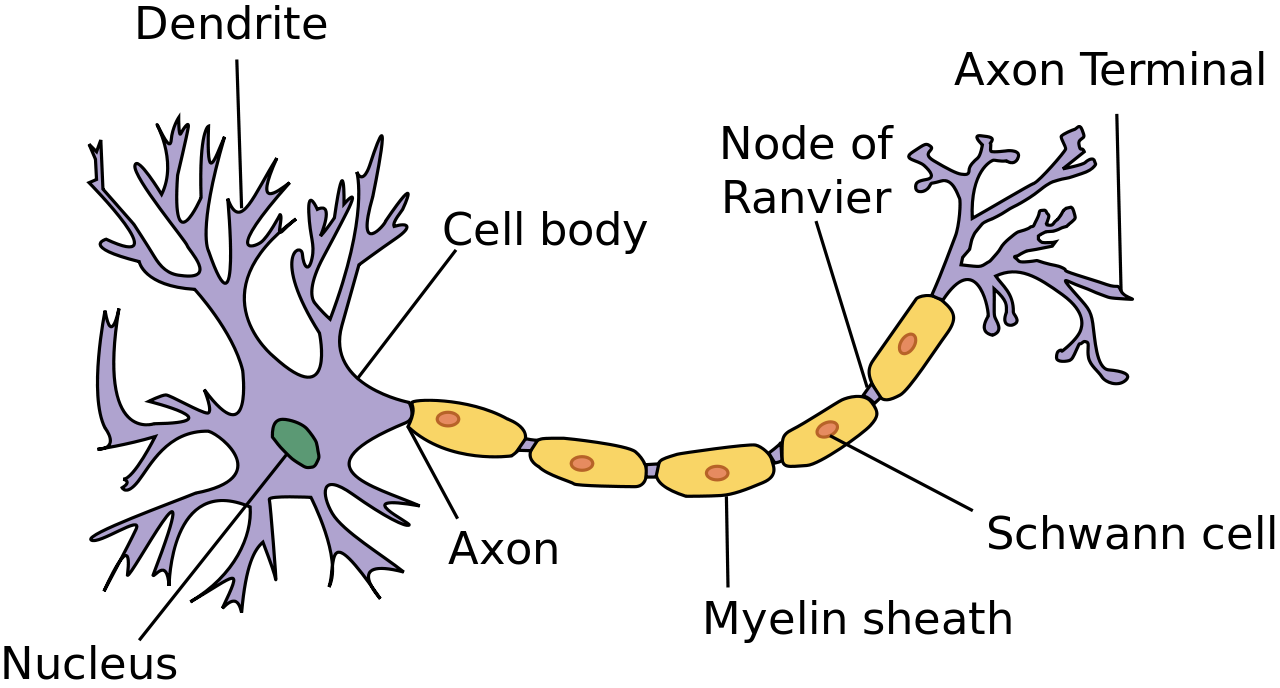

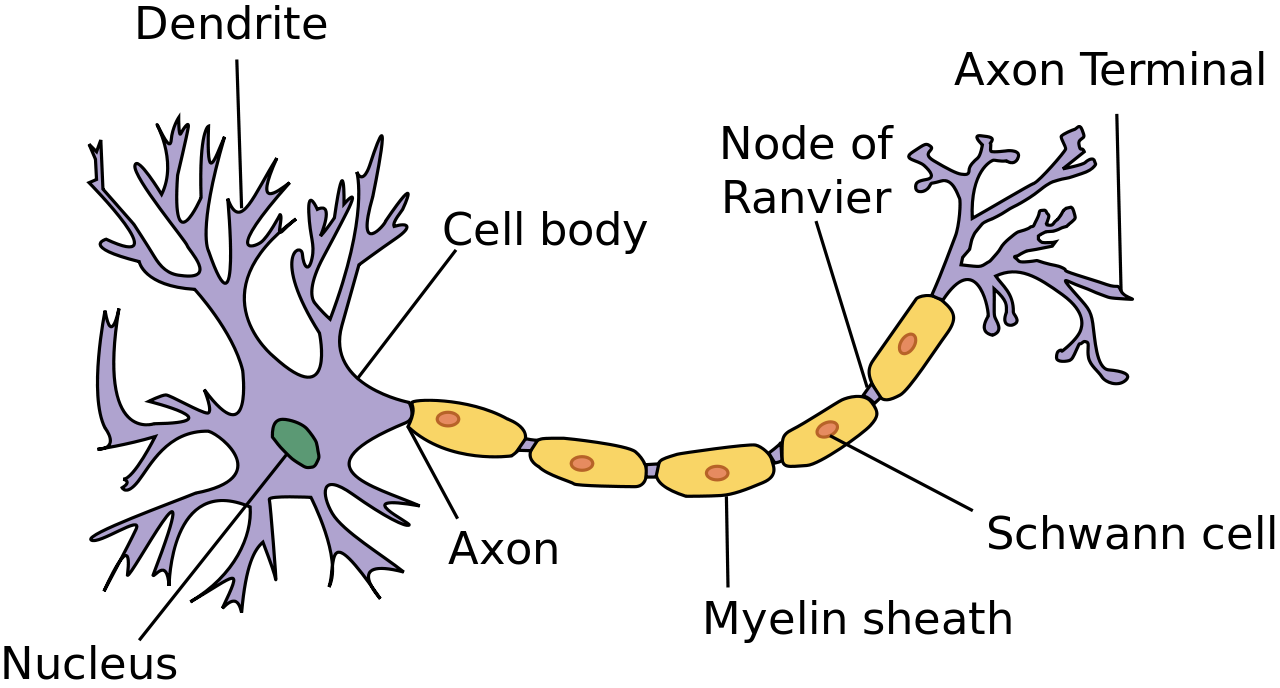

A motor neuron takes an impulse from the brain into its dendrites. These impulses are combined into one message stream, sent along the axon, and then split across the axon terminals to transmit to the muscles. (A huge thank you to my daughter Grace, who has taken biology a lot more recently than I have.)

Livestream as Neuron

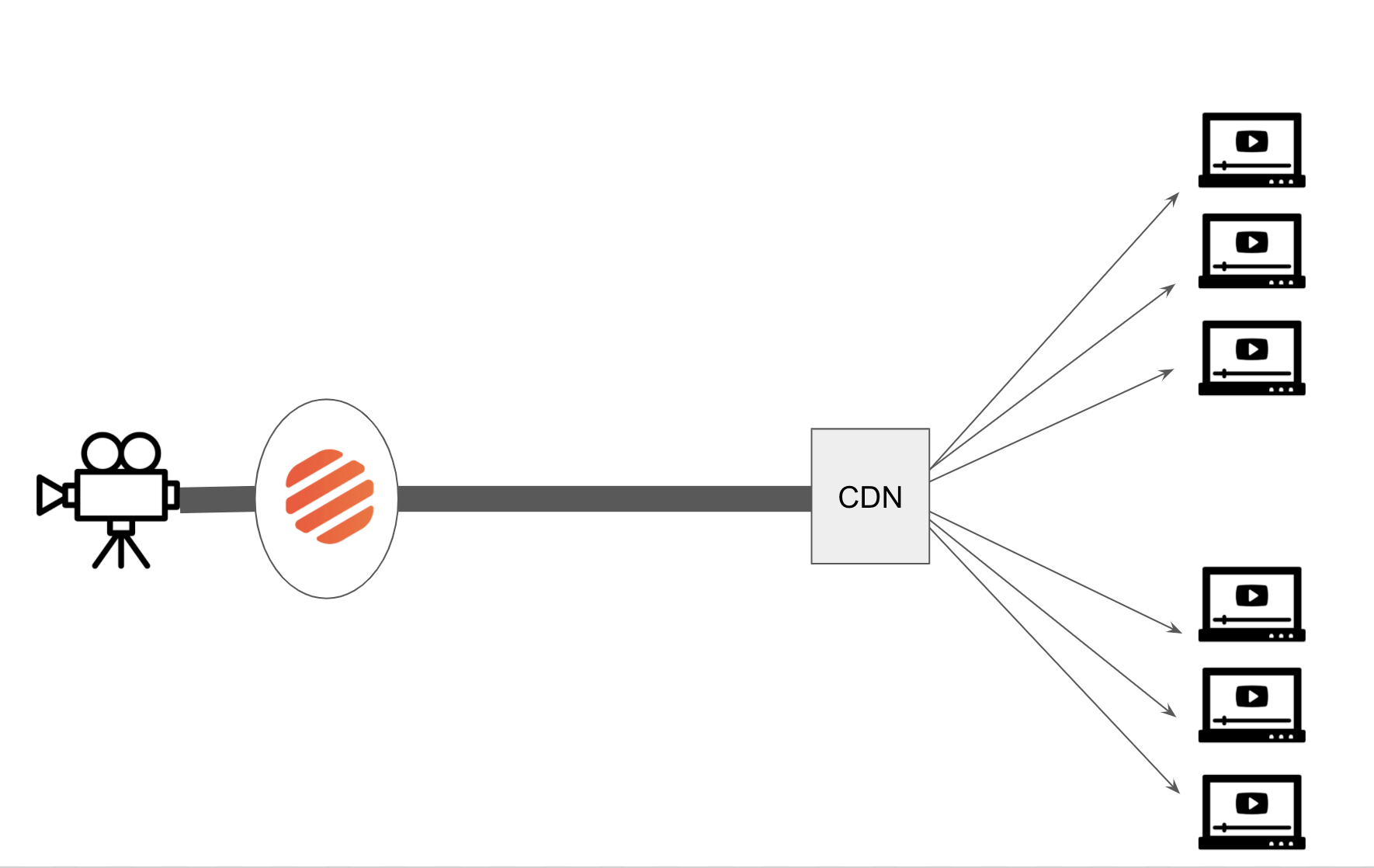

When you create a livestream at api.video, you are creating the 'neuron' that transmits video from your lens, modifies the signal to send out, and then passes the signal through the internet to your viewers.

In your body, the brain sends a signal to the neuron with chemicals called neurotransmitters to kick off the signalling process.

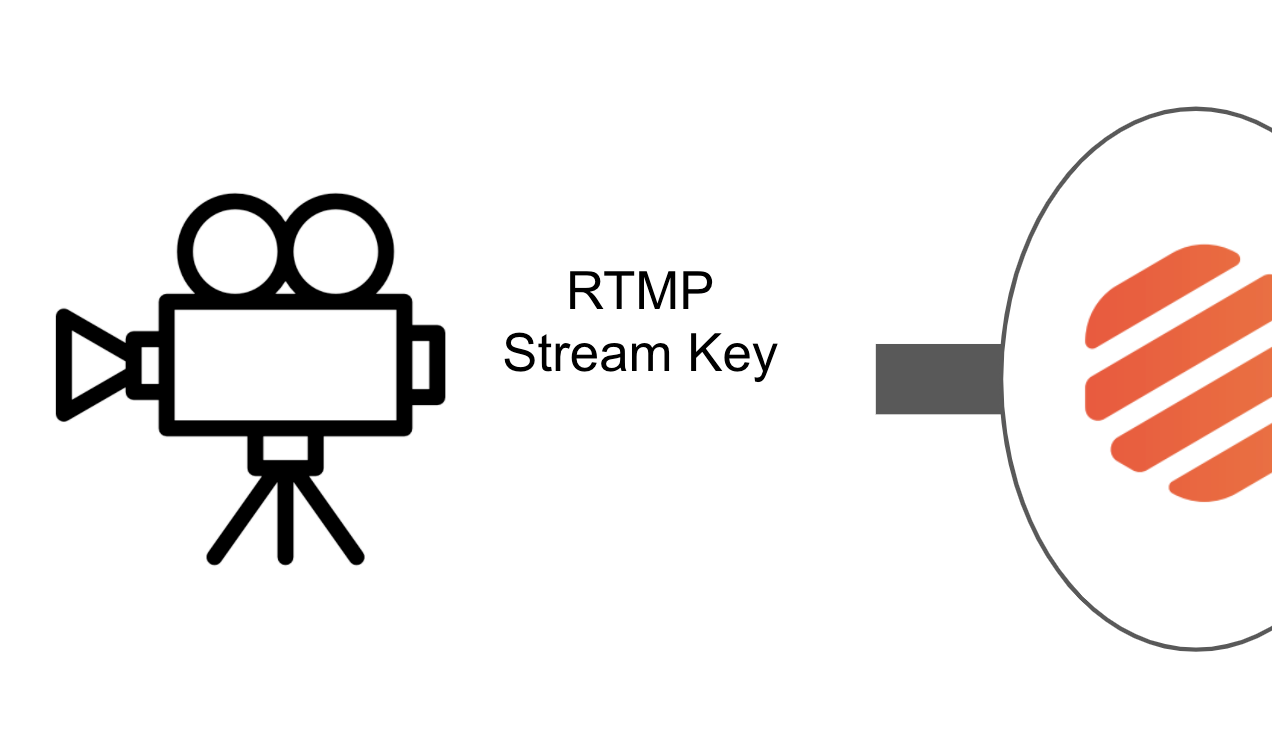

The "brain" of your livestream is your camera and the video processing software on your computer. We have tutorials on how to use OBS, Zoom and a web browser as the brains that connect with your stream. Your 'neurotransmitter' for connecting to the livestream is a streamKey and an RTMP URL (rtmp://broadcast.api.video/s), which together begin the transmission of the video. RTMP is an industry standard (going back to Flash Video), known for low-latency video delivery. Flash no longer exists in the browser, but RTMP is still commonly used in video processing backends (like this one).
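
Tools such as OBS take the RTMP URL and the streamKey as two separate fields, while a command-line encoder such as ffmpeg usually wants one combined URL. Here is a minimal sketch of building that command. It assumes the streamKey is appended as the last path segment of the RTMP URL, which is a common RTMP convention but is not confirmed above. `build_ffmpeg_command` and the file name `input.mp4` are illustrative names, not part of any api.video SDK.

```python
import shlex

RTMP_BASE = "rtmp://broadcast.api.video/s"  # ingest URL quoted in the text


def build_ffmpeg_command(stream_key: str, source: str) -> list[str]:
    """Return an ffmpeg argument list that pushes `source` to the RTMP ingest."""
    if not stream_key:
        raise ValueError("stream_key must not be empty")
    # Assumption: the stream key is the final path segment of the ingest URL.
    target = f"{RTMP_BASE}/{stream_key}"
    return [
        "ffmpeg",
        "-re",              # read the input at its native frame rate
        "-i", source,
        "-c:v", "libx264",  # encode video as H.264
        "-c:a", "aac",      # encode audio as AAC
        "-f", "flv",        # RTMP carries an FLV container
        target,
    ]


if __name__ == "__main__":
    # Placeholder key: substitute the streamKey of your own livestream.
    print(shlex.join(build_ffmpeg_command("YOUR_STREAM_KEY", "input.mp4")))
```

Running the script prints the command rather than executing it, so you can check the target URL before sending any video.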

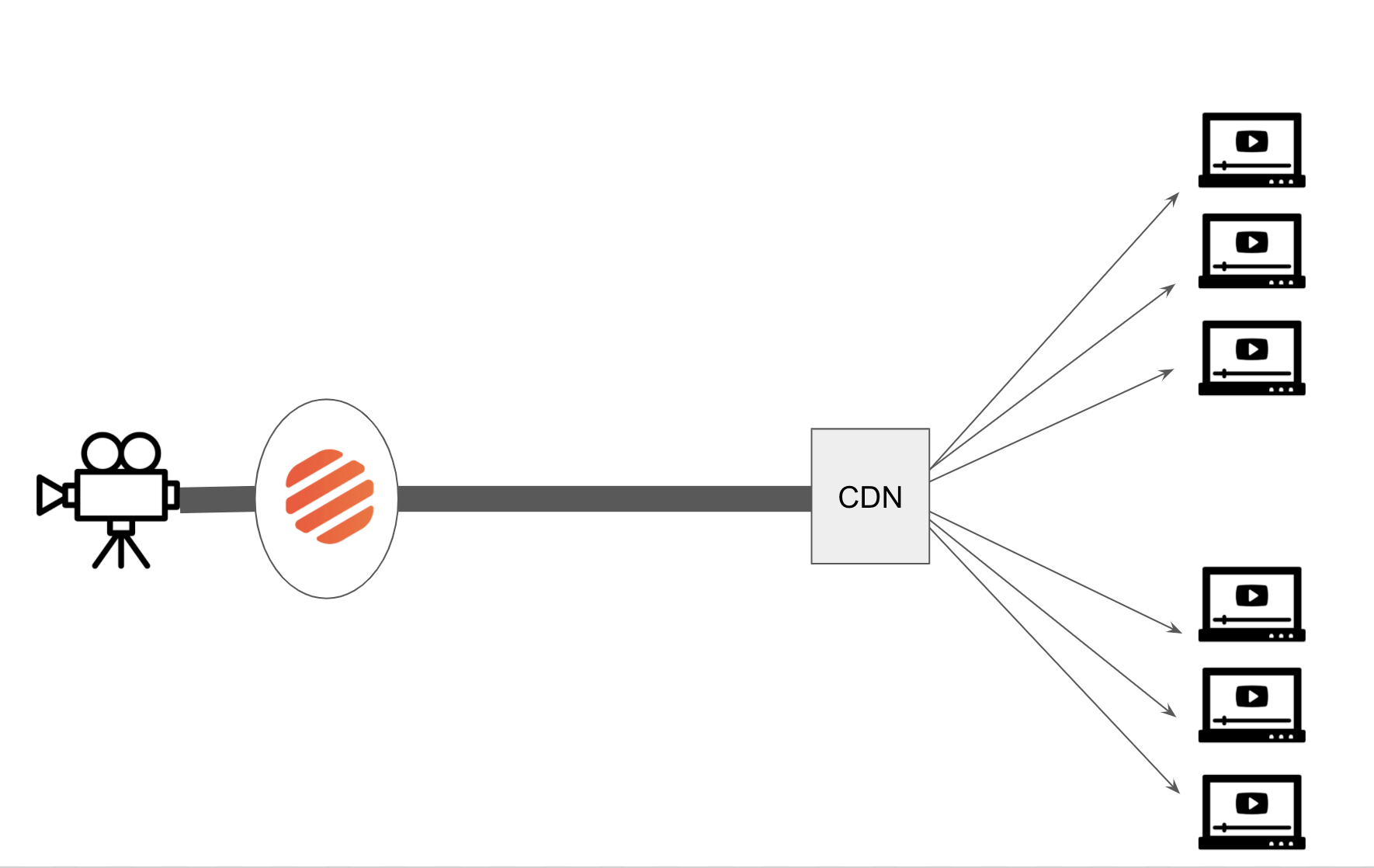

Once you have connected your camera to the livestream, the video will travel the internet to api.video, undergo transcoding, and travel as a single signal through the internet to a CDN. At the CDN, each segment of the livestream is cached and sent on to each individual viewer.

When you create a livestream, you receive a player URL (and a matching iframe) that describes the “other end” of the stream, where your many viewers can watch. It will look something like: https://embed.api.video/live/li400mYKSgQ6xs7taUeSaEKr.
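
If you only have the livestream ID to hand, the player URL can be rebuilt from the pattern shown above. The sketch below does that and wraps the URL in a simple iframe. The iframe attributes (size, `allowfullscreen`) are assumptions for illustration; the iframe that api.video returns may differ, so prefer the one from the API response when you have it.

```python
from html import escape

EMBED_BASE = "https://embed.api.video/live"  # pattern taken from the example URL


def player_url(live_stream_id: str) -> str:
    """Return the hosted player URL for a livestream ID."""
    return f"{EMBED_BASE}/{live_stream_id}"


def iframe_html(live_stream_id: str, width: int = 640, height: int = 360) -> str:
    """Return a basic iframe tag that embeds the hosted player."""
    src = escape(player_url(live_stream_id), quote=True)
    # Assumed attributes: adjust the size and permissions to suit your page.
    return (
        f'<iframe src="{src}" width="{width}" height="{height}" '
        "allowfullscreen></iframe>"
    )


if __name__ == "__main__":
    print(iframe_html("li400mYKSgQ6xs7taUeSaEKr"))
```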

Just like your muscles get signals from the same neurons over and over to contract and expand, you can reuse your livestream. The great part of reusing your livestream is that your settings remain the same in your recording setup AND for your viewers.

Digging deeper

For many of you, that may be all you want to know: hook up the pieces, and the video will stream. Like a neuron, data travels through the expected path, and it works without any conscious effort. But let’s look a bit deeper into how the livestream works.

Once your video leaves your camera, it travels to the servers at api.video. When it arrives, our live transcoders begin transforming the incoming Flash Video (RTMP) feed into an HLS (HTTP Live Streaming) stream.

An HLS stream consists of several different sizes and bitrates to ensure proper delivery of your stream to end users. Additionally, each livestream is cut into 2-second segments of video.

Did you know that if you stream 4K video to us, we’ll output a 4K stream to your viewers?

Each stream has a manifest file that lists the segments the player should fetch next. At the time of this writing (October 2020), each manifest lists 3 segments, covering the next 6 seconds of video delivery (each segment consists of 2s of video). The manifest and the corresponding video segments are quickly transmitted to the many endpoints that are watching the video. As you might imagine, each player picks up a new manifest from the server every few seconds, with an updated list of segments.
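
To make the sliding window concrete, here is a rough sketch that generates a live HLS media playlist listing the 3 newest 2-second segments. The playlist tags are standard HLS, but the segment file names (`segment_N.ts`) and the function name are hypothetical, and this is not a description of the actual api.video transcoder.

```python
SEGMENT_SECONDS = 2  # segment length, from the text
WINDOW_SIZE = 3      # segments listed per manifest, from the text


def live_manifest(newest_index: int) -> str:
    """Build a media playlist that lists the WINDOW_SIZE newest segments."""
    if newest_index < 0:
        raise ValueError("newest_index must be >= 0")
    first = max(0, newest_index - WINDOW_SIZE + 1)
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:3",
        f"#EXT-X-TARGETDURATION:{SEGMENT_SECONDS}",
        # The media sequence number tells the player which segment comes first.
        f"#EXT-X-MEDIA-SEQUENCE:{first}",
    ]
    for index in range(first, newest_index + 1):
        lines.append(f"#EXTINF:{SEGMENT_SECONDS:.3f},")
        lines.append(f"segment_{index}.ts")  # hypothetical file name
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Two consecutive refreshes: the window slides forward by one segment.
    print(live_manifest(10))
    print(live_manifest(11))
```

Each refresh drops the oldest segment and adds the newest one, and the media sequence number increases by one so the player can tell which segments it has already played.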

Internet to the CDN

Once created and added to the manifest, the video segments are requested by your users all around the world. The segments leave api.video and travel through the internet to a Content Distribution Network (CDN) node. At the CDN, the segments are cached so that other viewers in that region do not need to go all the way back to the home server for the segments. From the CDN, the video continues the "last mile" to your viewers, where they can see the video. Here the path of each video segment becomes very branched, as the path to each user will vary.
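
The caching step can be pictured with a toy model. In this sketch an edge node only goes back to the origin the first time a segment is requested and serves every later viewer from its own copy. `Origin` and `EdgeCache` are made-up classes for illustration; a real CDN also handles expiry, eviction and many nodes.

```python
class Origin:
    """Stand-in for the home server; counts how often it is asked for data."""

    def __init__(self) -> None:
        self.requests = 0

    def fetch(self, name: str) -> bytes:
        self.requests += 1
        return f"video data for {name}".encode()


class EdgeCache:
    """Stand-in for one CDN node that keeps a copy of each segment it serves."""

    def __init__(self, origin: Origin) -> None:
        self.origin = origin
        self._store: dict[str, bytes] = {}

    def get(self, name: str) -> bytes:
        if name not in self._store:
            # Cache miss: go back to the origin once, then keep the copy.
            self._store[name] = self.origin.fetch(name)
        return self._store[name]


if __name__ == "__main__":
    origin = Origin()
    edge = EdgeCache(origin)
    for _viewer in range(1000):
        edge.get("segment_8.ts")  # hypothetical segment name
    print(origin.requests)
```

A thousand viewers in the same region cost the origin a single request for that segment.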

Viewing

At the end of the api.video livestream, you use the provided player URL or iframe on your webpage. This URL allows the video to leave the api.video 'neuron' and appear on your webpage or mobile app for your viewers to watch.

Player feedback

Unlike neurons, where information travels just one way, the connection between api.video and the player is not a one-way pipe. As the player receives the manifest files, it can request different video bitrates to ensure smooth playback should there be changes in network connectivity.
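
One simple way a player might make that choice is to measure how fast the last segment downloaded and pick the highest bitrate that fits with some headroom. The sketch below shows that idea only. The rendition ladder and the 0.8 safety factor are invented for the example, and real players use more elaborate logic.

```python
# Hypothetical rendition ladder, sorted from lowest to highest bitrate.
RENDITIONS = [
    ("240p", 400_000),
    ("480p", 1_200_000),
    ("720p", 2_800_000),
    ("1080p", 5_000_000),
]


def throughput_bps(segment_bytes: int, download_seconds: float) -> float:
    """Estimate network throughput from one segment download."""
    return segment_bytes * 8 / download_seconds


def pick_rendition(measured_bps: float, safety: float = 0.8) -> tuple[str, int]:
    """Pick the highest rendition that fits within a share of the throughput."""
    budget = measured_bps * safety
    best = RENDITIONS[0]  # fall back to the lowest rendition
    for rendition in RENDITIONS:
        if rendition[1] <= budget:
            best = rendition
    return best


if __name__ == "__main__":
    # A 1 MB segment that took 2 seconds to arrive is about 4 Mbit/s.
    speed = throughput_bps(1_000_000, 2.0)
    print(pick_rendition(speed))
```

If the network slows down, the next measurement gives a smaller budget and the player steps down to a lower rendition instead of stalling.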

Rewind

The manifest file lists the 3 newest segments of the video for playback. But there is two-way communication between the player and the server. If your users “rewind” the live stream, the player can request a manifest and segments for parts of the video that are in the “past.” This means that you can watch earlier in the live video (up to 5 minutes from the live point) in our DVR mode.
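
The numbers above imply a simple bit of arithmetic: 5 minutes of 2-second segments is 150 segments behind the live point. The sketch below works out which segment a rewind lands on, assuming segments are numbered consecutively from 0. The function names are illustrative and do not reflect how the player is implemented.

```python
SEGMENT_SECONDS = 2         # segment length, from the text
DVR_WINDOW_SECONDS = 5 * 60  # DVR window, from the text


def dvr_segment_count() -> int:
    """Number of segments that fit inside the DVR window."""
    return DVR_WINDOW_SECONDS // SEGMENT_SECONDS


def segment_for_rewind(live_index: int, seconds_back: float) -> int:
    """Return the segment index that plays `seconds_back` before the live point."""
    if not 0 <= seconds_back <= DVR_WINDOW_SECONDS:
        raise ValueError("rewind must stay inside the DVR window")
    segments_back = int(seconds_back // SEGMENT_SECONDS)
    # Never go before the first segment of the stream.
    return max(0, live_index - segments_back)


if __name__ == "__main__":
    print(dvr_segment_count())
    print(segment_for_rewind(live_index=1000, seconds_back=60))
```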

Conclusion

A neuron fires information from the brain to muscles in the body instantaneously and efficiently thousands of times a second. Similarly, a livestream transmits data from the streamer, through api.video, to your viewers in a fast, seamless, and efficient way.